AI is no longer an experimental initiative; it is a strategic priority. Yet for many enterprises, the journey from AI pilot to full-scale production remains complex, costly and uncertain. Organizations need AI platforms that are scalable, interoperable, energy-efficient and capable of delivering measurable business outcomes.

HCLTech, in collaboration with AMD, addresses this challenge with a comprehensive Full Stack AI solution that integrates infrastructure, software, ecosystem components and managed services, enabling enterprises to adopt AI confidently and at scale.

The enterprise AI reality: Complex, expensive and fragmented

Across industries, organizations face significant barriers when deploying AI and Generative AI in production.

AI workloads demand high-performance infrastructure capable of handling massive parallel computations. This includes CPUs and accelerators such as GPUs, high-memory machines for training large models and advanced networking with high-bandwidth interconnects. These environments also require sophisticated cooling and power management systems.

Beyond hardware, enterprises must manage a complex software stack: ML frameworks, accelerator drivers, data engineering pipelines, Kubernetes-based container orchestration and model-serving platforms such as Triton and ONNX Runtime, all of which must be optimized to work together seamlessly.

Even when the technology is in place, converting AI investments into measurable ROI remains difficult. Many organizations struggle to scale large language models (LLMs) and multi-agent systems efficiently due to:

- GPU underutilization

- Lack of distributed orchestration

- Limited hardware-software integration

Additionally, proprietary AI ecosystems often create vendor lock-in, limiting flexibility and increasing long-term risk. At the same time, fragmented infrastructure leads to unpredictable costs, idle GPU capacity and limited resource visibility.
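To make the cost of idle GPU capacity concrete, the following is a minimal sketch of how utilization and idle spend might be estimated from telemetry. The `GpuSample` schema, the sample readings and the flat hourly rate are all hypothetical and chosen only for illustration; they do not come from any HCLTech or AMD tool.

```python
from dataclasses import dataclass


@dataclass
class GpuSample:
    """One telemetry reading for a single GPU (hypothetical schema)."""
    gpu_id: str
    busy_fraction: float  # 0.0 = fully idle, 1.0 = fully busy over the interval
    hours: float          # length of the sampling interval in hours


def fleet_utilization(samples: list[GpuSample]) -> float:
    """Time-weighted average utilization across all samples."""
    total_hours = sum(s.hours for s in samples)
    if total_hours == 0:
        return 0.0
    busy_hours = sum(s.busy_fraction * s.hours for s in samples)
    return busy_hours / total_hours


def idle_cost(samples: list[GpuSample], hourly_rate: float) -> float:
    """Spend attributable to idle GPU time at a flat hourly rate."""
    idle_hours = sum((1.0 - s.busy_fraction) * s.hours for s in samples)
    return idle_hours * hourly_rate


# Illustrative numbers only: two GPUs observed for one day each.
samples = [
    GpuSample("gpu-0", busy_fraction=0.75, hours=24.0),
    GpuSample("gpu-1", busy_fraction=0.25, hours=24.0),
]
print(fleet_utilization(samples))           # 0.5
print(idle_cost(samples, hourly_rate=2.0))  # 48.0
```

In this example half of the paid GPU hours are idle, so half of the daily spend produces no work. Real fleets would also need to account for reserved capacity, burst pricing and per-tenant attribution.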

A full-stack AI solution built for enterprise scale

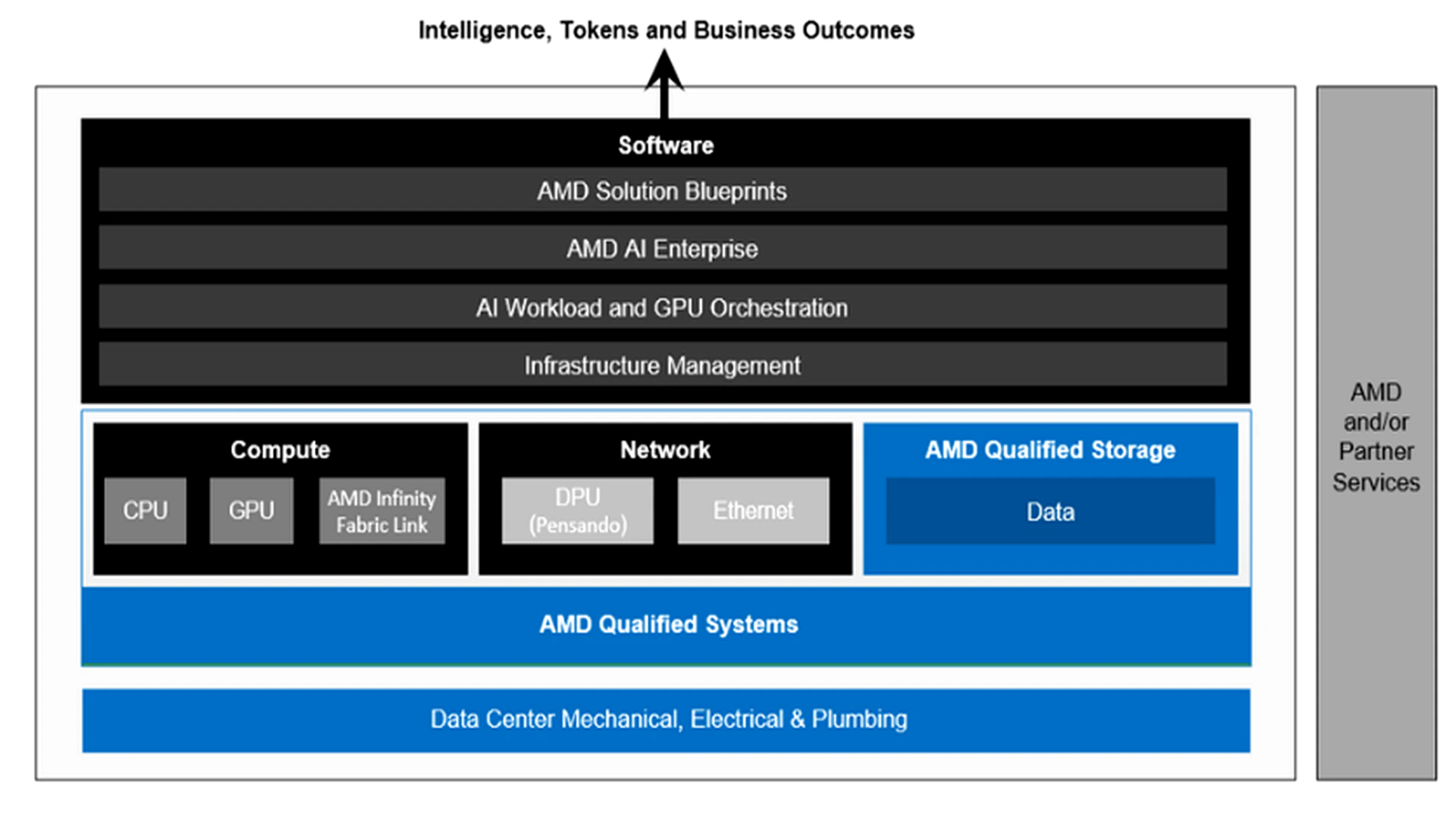

HCLTech and AMD combine their strengths to deliver an end-to-end AI solution stack that addresses these challenges holistically.

The joint solution modernizes AI environments across data centers and cloud platforms using CPU, GPU and adaptive computing technologies built on open standards.

HCLTech provides consulting, solution architecture, implementation and operational support, while AMD delivers high-performance, open and scalable compute platforms.

This unified approach enables enterprises to deploy AI across:

- Public cloud environments

- Neo-cloud providers

- On-prem data centers

- Edge platforms and AI-enabled PCs

Compatibility with existing x86 infrastructure ensures interoperability and smooth integration. Organizations can choose cloud-based AI to reduce CAPEX, on-prem AI to optimize OPEX, or a hybrid model that balances cost, performance and security, resulting in right-sized, AI-ready environments.

The AI compute engine: Optimized for training, inference and edge

At the core of the offering is a comprehensive compute stack built on AMD technologies.

AMD EPYC™ processors power general-purpose data center and AI orchestration workloads with high core counts and strong performance characteristics.

AMD Instinct™ accelerators are purpose-built for AI training and inference in enterprise data centers, delivering the computational intensity required for large models.

For endpoint AI, AMD integrates NPUs through its XDNA architecture, enabling on-device AI processing for intelligent edge and client systems.

Advanced networking solutions, including Ultra Ethernet-based Pensando™ Pollara NICs and open rack scale-out designs, provide efficient, high-performance connectivity for modern AI workloads.

Together, these components create a scalable and workload-optimized AI infrastructure foundation.

Software and ecosystem: Open, modular and enterprise-ready

The solution extends beyond hardware with a robust software ecosystem.

AMD ROCm™, an open-source GPU computing stack, includes drivers, development tools and APIs optimized for Generative AI and HPC applications. Its open architecture simplifies code migration and supports rapid innovation.
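One way to picture the code-migration point, assuming PyTorch as the framework (the article does not name one): ROCm builds of PyTorch expose AMD GPUs through the same `torch.cuda` interface and `"cuda"` device string that CUDA builds use, so device-selection code often carries over unchanged. The helper names below are hypothetical, and the sketch falls back to CPU when PyTorch is not installed.

```python
def describe_accelerator() -> str:
    """Return 'rocm', 'cuda' or 'cpu' depending on the local PyTorch build."""
    try:
        import torch  # optional dependency; assumed to be a ROCm or CUDA build
    except ImportError:
        return "cpu"
    if not torch.cuda.is_available():
        return "cpu"
    # ROCm builds of PyTorch set torch.version.hip; CUDA builds leave it as None.
    return "rocm" if getattr(torch.version, "hip", None) else "cuda"


def pick_device() -> str:
    """Device string to pass to tensor.to(...); ROCm GPUs also use 'cuda'."""
    return "cpu" if describe_accelerator() == "cpu" else "cuda"


print(describe_accelerator(), pick_device())
```

Custom kernels and vendor-specific libraries may still need porting work; this sketch only covers the framework-level device selection.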

The AMD Enterprise AI Suite further accelerates deployment through four modular components:

- Solution blueprints: Pre-validated enterprise designs that reduce engineering effort and accelerate production rollout.

- Inference microservices: Optimized containers with support for open models and OpenAI-compatible APIs, featuring intelligent hardware tuning.

- AI workbench: Development environments and prebuilt pipelines to streamline model fine-tuning and deployment.

- Resource manager: Intelligent GPU scheduling, distributed orchestration (including Ray integration) and telemetry-driven optimization to enhance utilization and cost control.

This modular architecture allows enterprises to adopt the full suite or selectively implement components based on their AI maturity and needs.
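Because the inference microservices are described as exposing OpenAI-compatible APIs, existing client code written for that request format should in principle be reusable. The sketch below builds a chat completions request with only the Python standard library. The base URL, model name and prompt are hypothetical placeholders, not values documented by AMD or HCLTech, and the request is constructed but not sent.

```python
import json
import urllib.request

# Hypothetical values: replace with the endpoint and model your deployment exposes.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "example-open-model"


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL_NAME) -> urllib.request.Request:
    """Build (but do not send) a POST to the OpenAI-style chat completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant text out of an OpenAI-style chat completion response."""
    body = json.loads(response_body)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Summarize this quarter's fraud alerts.")
print(request.full_url)
```

To send the request against a running endpoint, pass it to `urllib.request.urlopen(request)` and hand the result of `.read()` to `extract_reply`. Authentication headers, if the deployment requires them, would be added to the `headers` dictionary.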

Industry-wide impact

The HCLTech + AMD solution supports AI use cases across multiple sectors, including:

- BFSI: Fraud detection, risk modeling, compliance automation

- Retail: Recommendation engines, demand forecasting

- Manufacturing: Predictive maintenance, digital twins

- Healthcare: Diagnostics, clinical documentation

- Telecom: Network optimization, churn prediction

- Logistics and Energy: Route optimization, grid forecasting

- Public Sector and Media: Citizen services, personalization, content generation

By addressing infrastructure, software, orchestration and operational layers together, enterprises can deploy these use cases efficiently and securely.

AI Factory

HCLTech and AMD offer AI Factory as a platform and service framework that helps businesses industrialize and scale AI across the enterprise, moving beyond isolated experiments toward production-grade AI adoption.

Core capabilities

- Unified AI Lifecycle Management: From data ingestion and model development to deployment, monitoring and ongoing optimization, all within a governed ecosystem.

- Infrastructure and Platform Services: Deployment of scalable AI infrastructure, including compute, storage and orchestration layers tailored for hybrid, cloud native or on-prem environments.

- Reusable Accelerators and IP: Libraries, blueprints and prebuilt frameworks to accelerate development and reduce engineering effort.

- Governance, Security and Compliance: Integrated mechanisms for enterprise-grade governance and risk control.

- Managed Operations: 24/7 support and lifecycle management for AI environments.

Business outcomes: Turning AI into measurable value

The HCLTech and AMD collaboration delivers clear executive-level benefits:

- Accelerated Time-to-Production: Integrated compute engines and prebuilt blueprints reduce development cycles and speed value realization.

- Scalable AI Operations: Optimized orchestration and distributed scheduling support production-scale LLMs and enterprise AI workloads.

- Open and Modular Architecture: Avoid vendor lock-in while benefiting from ecosystem innovation and flexibility.

- Predictable Costs and Optimized TCO: Intelligent GPU utilization, quota management and performance tuning reduce idle resources and improve capacity planning.

- Energy Efficiency and Performance Balance: Workload-optimized architectures ensure high performance while supporting sustainability goals.

Conclusion: From AI ambition to enterprise-grade execution

AI success requires more than powerful hardware or advanced models; it demands a cohesive, scalable and open full-stack ecosystem supported by deep implementation expertise.

Through its strategic collaboration with AMD, HCLTech delivers a comprehensive AI solution stack that modernizes infrastructure, accelerates deployment and optimizes operational performance.

The result is an enterprise-ready AI platform that enables organizations to move beyond experimentation and transform AI investments into scalable, measurable and sustainable business outcomes.

To learn more about our partnership with AMD, visit our AMD Ecosystem webpage.