As the number of connected devices (devices that can exchange data with other systems over the Internet) has surged in recent years, it has opened up new opportunities in the way we live and work. It has also given us technological tools to limit the degradation of our environment, helping to preserve our planet's natural resources for future generations. These interconnected devices have fueled "Digitalization", the use of digital technologies to improve business processes, products and services. In the commercial real estate industry, digitalization has produced eco-friendly, people-oriented and highly efficient "smart" buildings. Such buildings rely on a technology-driven solution that uses a combination of sensors and software to monitor and manage the equipment and devices within a commercial building, such as lighting, power, water systems and motors.

So far, so good.

But how do you implement such digitalization? Here, we discuss the blueprint of a digitalization solution, using commercial buildings as an example. The good news is that this solution is generic enough to be applied in various industries, as long as you follow the basic template. The architecture of any digitalization solution comprises four components: digital modeling of physical assets (or processes), data collection, data processing and storage, and, finally, generation of insights that lead to actionable outcomes.
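To make the four components concrete, here is a deliberately tiny, in-memory sketch of how they fit together. Everything in it (the `AssetModel`, `Reading` and `TelemetryStore` classes and the `find_overloads` rule) is hypothetical and merely stands in for the managed AWS services discussed later; it only illustrates the flow from model to actionable insight.

```python
from dataclasses import dataclass, field


@dataclass
class AssetModel:
    """Component 1 (hypothetical): a digital model of a physical asset."""
    name: str
    measurements: set[str]


@dataclass
class Reading:
    """Component 2 (hypothetical): one data point collected from a sensor."""
    asset: str
    measurement: str
    value: float


@dataclass
class TelemetryStore:
    """Component 3 (hypothetical): processing and storage of collected data."""
    model: AssetModel
    readings: list[Reading] = field(default_factory=list)

    def ingest(self, reading: Reading) -> bool:
        # Processing: keep only measurements that the model declares.
        if reading.measurement not in self.model.measurements:
            return False
        self.readings.append(reading)
        return True


def find_overloads(store: TelemetryStore, measurement: str, limit: float) -> list[str]:
    """Component 4 (hypothetical): turn stored data into a list of assets to inspect."""
    return sorted({r.asset for r in store.readings
                   if r.measurement == measurement and r.value > limit})


pump = AssetModel("Pump", {"motor_speed_rpm", "power_kw"})
store = TelemetryStore(pump)
store.ingest(Reading("pump-1", "power_kw", 3.2))
store.ingest(Reading("pump-2", "power_kw", 7.9))
store.ingest(Reading("pump-2", "humidity", 40.0))  # rejected: not part of the model
print(find_overloads(store, "power_kw", limit=5.0))  # ['pump-2']
```

In the real solution each of these pieces is replaced by a managed service, but the contract between them (model, then data, then storage, then insight) stays the same.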

Let’s take the example of a commercial building. Through its digitalization, we want to achieve:

- Increased comfort for tenants and residents through smart lighting and automated control of temperature and air flow.

- Improved building operations through predictive maintenance, which reduces the risk of asset failures (valves, motors, lighting, etc.).

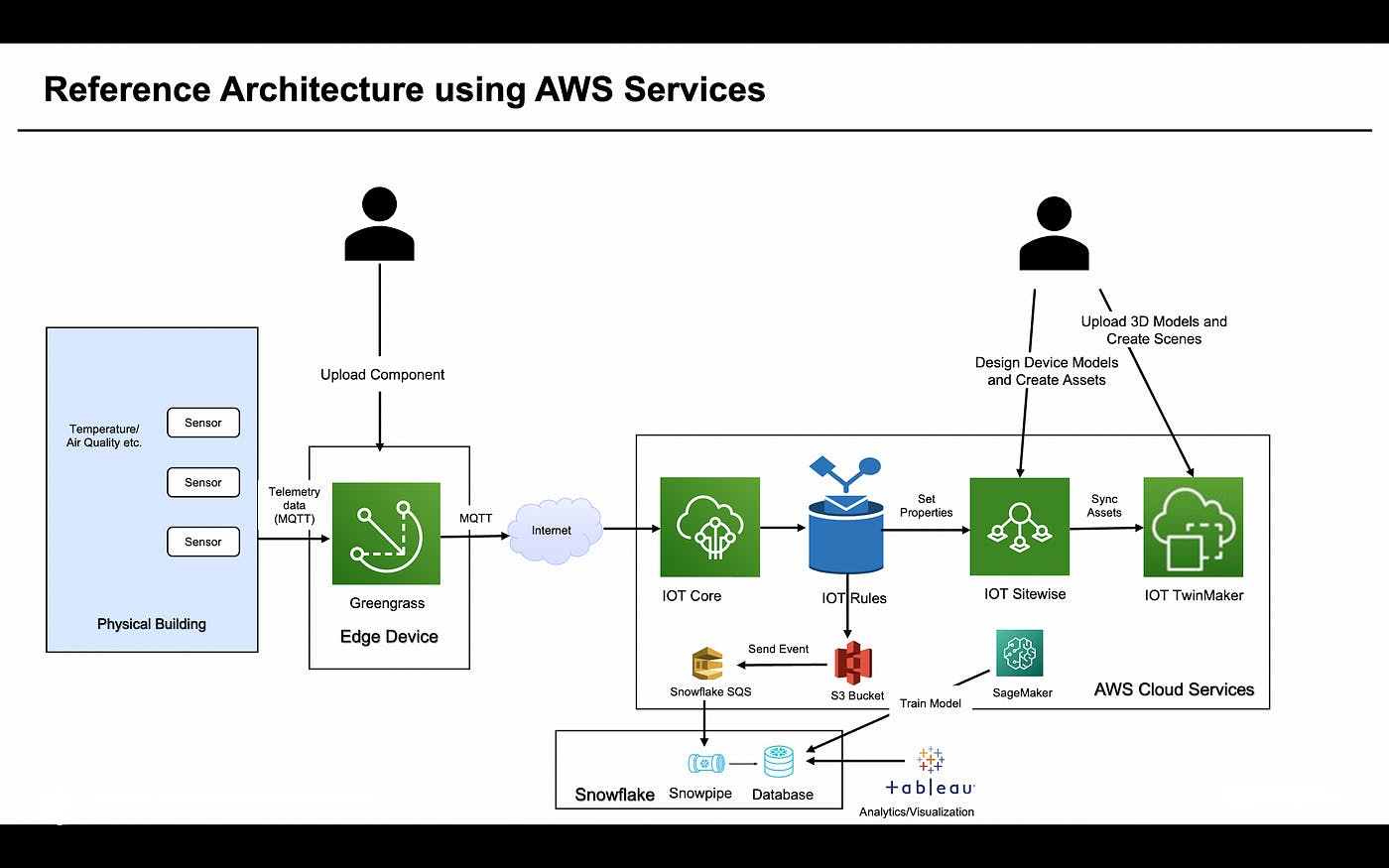

Figure 1 below depicts how the architecture of the solution may look, running on AWS infrastructure and leveraging various AWS services.

Figure 1 – Reference Architecture using AWS

The first step in the solution is to model the physical assets and/or business processes. This is done in AWS IoT SiteWise, where you build asset models of physical "things". When creating these models, capture how assets are composed of other assets; AWS IoT SiteWise represents these parent-child relationships as hierarchies. For example, in our commercial building, the building comprises floors, each floor has a number of rooms, and the rooms contain equipment such as lights, pumps and electric meters. So you start by modeling the lowest-level assets, those that are not composed of other assets, whether they exist on their own or as part of another asset. Then you move on to model the assets composed of them, such as rooms, floors and, finally, the building itself. Physical assets may also have attributes that rarely change, such as a model number, and measurements that you want to monitor, such as the speed of a motor or the voltage of an electrical instrument.
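As a sketch of the bottom-up modeling described above, the dictionaries below follow the request shape of the AWS IoT SiteWise `CreateAssetModel` API: attributes for static data, measurements for sensor streams, and hierarchies for composition. The model names, property names and units are hypothetical examples, and the child model IDs are placeholders, because real IDs are only returned once the child models have been created.

```python
import json


def attribute(name: str, default: str) -> dict:
    # An attribute holds rarely changing static data, e.g. a model number.
    return {"name": name, "dataType": "STRING",
            "type": {"attribute": {"defaultValue": default}}}


def measurement(name: str, unit: str) -> dict:
    # A measurement holds raw time-series data streamed from a sensor.
    return {"name": name, "dataType": "DOUBLE", "unit": unit,
            "type": {"measurement": {}}}


def hierarchy(name: str, child_model_id: str) -> dict:
    # A hierarchy declares that assets of this model can contain child assets.
    return {"name": name, "childAssetModelId": child_model_id}


# Leaf model first: a pump is not composed of other assets.
pump_model = {
    "assetModelName": "Pump",
    "assetModelProperties": [
        attribute("Model Number", "unknown"),
        measurement("Motor Speed", "rpm"),
        measurement("Voltage", "V"),
    ],
}

# Composite models next. "<...-model-id>" values are placeholders for the
# assetModelId returned when the child model is created.
room_model = {
    "assetModelName": "Room",
    "assetModelProperties": [measurement("Temperature", "Celsius")],
    "assetModelHierarchies": [hierarchy("Pumps", "<pump-model-id>")],
}
floor_model = {
    "assetModelName": "Floor",
    "assetModelHierarchies": [hierarchy("Rooms", "<room-model-id>")],
}

print(json.dumps(room_model, indent=2))
```

With credentials configured, each dictionary could be passed to `boto3.client("iotsitewise").create_asset_model(**pump_model)`, waiting until a model's status is `ACTIVE` before using its ID in a parent model's hierarchy.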

Once the models have been designed, they are "instantiated" to create digital assets in AWS IoT SiteWise. These assets are the digital replicas of your physical "things": as new data arrives, the state of a digital asset reflects the latest reported state of the corresponding physical asset, so you can see the current state of the physical "thing" from a remote device, for example in a web browser.
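Conceptually, instantiation turns the model tree into an asset tree whose properties always hold the newest reading. The in-memory sketch below is hypothetical and is not the SiteWise API; in AWS IoT SiteWise the corresponding operations are `CreateAsset`, `AssociateAssets` and, for reading the latest value, `GetAssetPropertyValue`.

```python
from dataclasses import dataclass, field


@dataclass
class DigitalAsset:
    """Hypothetical stand-in for an asset instantiated from a model."""
    name: str
    model: str
    children: list["DigitalAsset"] = field(default_factory=list)
    # property name -> (timestamp in seconds, value); only the latest is kept
    state: dict[str, tuple[int, float]] = field(default_factory=dict)

    def update(self, prop: str, timestamp: int, value: float) -> None:
        # Ignore out-of-order data so the state mirrors the newest reading.
        current = self.state.get(prop)
        if current is None or timestamp >= current[0]:
            self.state[prop] = (timestamp, value)

    def find(self, name: str) -> "DigitalAsset | None":
        # Depth-first search through the asset hierarchy.
        if self.name == name:
            return self
        for child in self.children:
            hit = child.find(name)
            if hit is not None:
                return hit
        return None


# Instantiate one branch of the building: building > floor > room > pump.
pump = DigitalAsset("Pump 1", "Pump")
room = DigitalAsset("Room 101", "Room", children=[pump])
floor = DigitalAsset("Floor 1", "Floor", children=[room])
building = DigitalAsset("HQ Building", "Building", children=[floor])

building.find("Pump 1").update("Motor Speed", 1_700_000_000, 1450.0)
building.find("Pump 1").update("Motor Speed", 1_699_999_990, 1300.0)  # older, ignored
print(building.find("Pump 1").state)  # {'Motor Speed': (1700000000, 1450.0)}
```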

The next step in the solution is data collection, which is performed by sensors embedded in the physical "thing", in our example the commercial building. These sensors capture different types of information from the surrounding environment, machines or people, such as ambient temperature, humidity or electric power consumption. The data is then sent to a local edge device using a standard messaging protocol such as MQTT. These edge devices are Internet-enabled and run AWS IoT Greengrass. Your custom application components, where you write business logic such as filtering messages, modifying message payloads, running local analytics and, finally, sending the messages to AWS IoT Core, can be packaged as Docker images and run as Docker containers on AWS IoT Greengrass.
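The business logic of such a component could look roughly like the function below: it filters, runs a small local analytic and reshapes each message. This is a hypothetical sketch: the message fields (`device`, `type`, `value`, `ts`), the allowed types and the window size are assumptions, and the actual publish step (typically done through the Greengrass IPC client in the AWS IoT Device SDK) is left out so the sketch runs anywhere.

```python
import json
from collections import deque
from typing import Optional

ALLOWED_TYPES = {"temperature", "humidity", "power"}  # assumed types worth forwarding
WINDOW = 5                                            # assumed moving-average window

_history: dict[str, deque] = {}  # recent values per device/type pair


def process(raw: bytes) -> Optional[dict]:
    """Return the payload to send to AWS IoT Core, or None to drop the message."""
    msg = json.loads(raw)
    # 1. Filtering: drop message types the cloud does not need.
    if msg.get("type") not in ALLOWED_TYPES:
        return None
    value = float(msg["value"])
    # 2. Local analytics: keep a short window and compute a moving average.
    key = f'{msg["device"]}/{msg["type"]}'
    window = _history.setdefault(key, deque(maxlen=WINDOW))
    window.append(value)
    # 3. Payload modification: emit the shape the cloud-side rule expects.
    return {
        "alias": f'/building/{msg["device"]}/{msg["type"]}',
        "value": value,
        "avg": round(sum(window) / len(window), 2),
        "ts": int(msg["ts"]),
    }


messages = [
    b'{"device": "room101", "type": "temperature", "value": 21.0, "ts": 1700000000}',
    b'{"device": "room101", "type": "temperature", "value": 23.0, "ts": 1700000060}',
    b'{"device": "room101", "type": "debug", "value": 1, "ts": 1700000060}',
]
for raw in messages:
    print(process(raw))  # the "debug" message is filtered out and prints None
```

Doing this filtering and smoothing at the edge reduces the volume of data sent to the cloud and keeps simple analytics working even when connectivity is intermittent.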

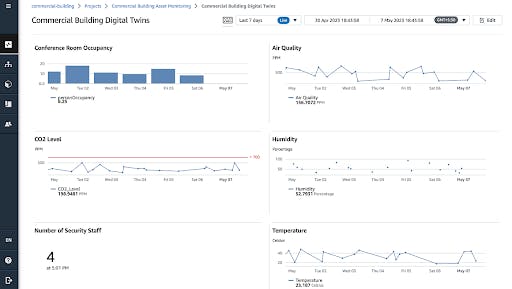

As the telemetry data from the sensors reaches AWS IoT Core, it is routed through AWS IoT Rules, wherein rules are defined to specify how the data is mapped to corresponding digital asset properties defined in step 1. This creates a time-stamped data stream that updates the digital asset properties in almost real-time. In order to view this time-stamped data, use the Monitoring Portal provided by AWS IoT SiteWise. Inside the Portal, create a dashboard to monitor various assets inside the building. Figure 2 below shows a dashboard that was created to monitor different aspects of our commercial building.

Figure 2 –Dashboard created on AWS SiteWise Monitoring Portal
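The AWS IoT rule that maps incoming telemetry to asset properties could be defined along the lines below, which follows the request shape of the AWS IoT `CreateTopicRule` API with an `iotSiteWise` action. The rule name, topic filter and IAM role ARN are placeholders, and the sketch assumes each message carries `alias`, `value` and `ts` fields, with the alias matching a property alias configured on the SiteWise asset.

```python
import json

ROLE_ARN = "arn:aws:iam::123456789012:role/IoTSiteWiseIngestRole"  # placeholder

rule = {
    "ruleName": "building_telemetry_to_sitewise",  # hypothetical name
    "topicRulePayload": {
        # Every message published on building/<device>/telemetry triggers the rule.
        "sql": "SELECT * FROM 'building/+/telemetry'",
        "awsIotSqlVersion": "2016-03-23",
        "ruleDisabled": False,
        "actions": [{
            "iotSiteWise": {
                "roleArn": ROLE_ARN,
                "putAssetPropertyValueEntries": [{
                    # ${...} substitution templates are filled from each message.
                    "propertyAlias": "${alias}",
                    "propertyValues": [{
                        "value": {"doubleValue": "${value}"},
                        "timestamp": {"timeInSeconds": "${ts}"},
                    }],
                }],
            }
        }],
    },
}

print(json.dumps(rule, indent=2))
```

With credentials configured, the rule could be created with `boto3.client("iot").create_topic_rule(**rule)`. Routing by property alias keeps the rule generic: one rule can feed every asset, as long as each device publishes the alias of the property it measures.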

We are not done yet.

While the monitoring dashboard gives you visibility into what is happening within the commercial building, that is not the be-all and end-all: our key objective is not only to monitor the telemetry data but also to analyze it and take proactive measures. To achieve this latter goal, we first have to store the data in a data warehouse or data lake. For this purpose, we use Snowflake, which can store and analyze large volumes of structured, semi-structured and unstructured data coming from sensors and other disparate systems. Snowflake can also ingest and process data in near real time, so insights can be extracted soon after the data arrives.

The integration of Snowflake into our solution takes place in four steps. First, create a rule in AWS IoT Core that routes the telemetry data to an Amazon S3 bucket. Second, create an external stage in Snowflake that uses the S3 bucket as its data location. Third, create a pipe with Snowpipe that loads data from the external stage into a table in a Snowflake database. Finally, configure event notifications on the S3 bucket so that they are sent to the Amazon SQS queue that Snowflake provides for the pipe. Together, these steps ensure that as soon as new telemetry data is written to the S3 bucket, Amazon S3 sends an event message to the SQS queue monitored by Snowpipe; Snowpipe then reads the new data from the bucket and loads it into the Snowflake table. Snowflake is, in turn, integrated with Tableau for data analytics and visualization through dashboards.
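The four steps can be sketched as the configuration below: an S3 action for the AWS IoT rule, the Snowflake SQL for the stage and pipe, and the S3 bucket notification. All names (bucket, prefix, role ARN, storage integration, stage, table, pipe) are hypothetical, and the sketch assumes a Snowflake storage integration with access to the bucket has already been created.

```python
BUCKET = "building-telemetry-raw"  # hypothetical bucket
PREFIX = "telemetry/"              # hypothetical key prefix

# Step 1: AWS IoT rule action that writes each message to S3 as one object.
# ${topic()}, ${timestamp()} and ${newuuid()} are AWS IoT SQL functions.
S3_RULE_ACTION = {
    "s3": {
        "roleArn": "arn:aws:iam::123456789012:role/IoTToS3Role",  # placeholder
        "bucketName": BUCKET,
        "key": PREFIX + "${topic()}/${timestamp()}-${newuuid()}.json",
    }
}


def snowflake_ddl(stage: str = "telemetry_stage", table: str = "raw_telemetry",
                  pipe: str = "telemetry_pipe",
                  integration: str = "s3_telemetry_int") -> list[str]:
    """Steps 2 and 3 as Snowflake SQL statements."""
    return [
        # Landing table: each JSON document is stored in a single VARIANT column.
        f"CREATE TABLE IF NOT EXISTS {table} (payload VARIANT)",
        # Step 2: external stage that points at the S3 location.
        f"CREATE STAGE IF NOT EXISTS {stage} URL = 's3://{BUCKET}/{PREFIX}' "
        f"STORAGE_INTEGRATION = {integration} FILE_FORMAT = (TYPE = 'JSON')",
        # Step 3: AUTO_INGEST makes the pipe load files announced on an SQS queue.
        f"CREATE PIPE IF NOT EXISTS {pipe} AUTO_INGEST = TRUE AS "
        f"COPY INTO {table} FROM @{stage}",
    ]


def s3_notification(queue_arn: str) -> dict:
    """Step 4: bucket notification config; queue_arn is the pipe's SQS queue ARN."""
    return {
        "QueueConfigurations": [{
            "QueueArn": queue_arn,
            "Events": ["s3:ObjectCreated:*"],
            "Filter": {"Key": {"FilterRules": [{"Name": "prefix", "Value": PREFIX}]}},
        }]
    }


for statement in snowflake_ddl():
    print(statement + ";")
```

The SQL could be run through a cursor from the Snowflake Connector for Python. `SHOW PIPES` then reports the pipe's `notification_channel`, which is the SQS queue ARN to pass to `boto3.client("s3").put_bucket_notification_configuration(Bucket=BUCKET, NotificationConfiguration=s3_notification(arn))`.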

The next part of our solution involves creating a machine learning (ML) model in Amazon SageMaker that analyzes the telemetry data, for example to detect anomalies and predict equipment failures. The model is then retrained on an ongoing basis using data from Snowflake.
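The article does not say which algorithm the SageMaker model uses. To illustrate what detecting anomalies in telemetry means, here is a simple rolling z-score detector in plain Python. This is a swapped-in statistical baseline, not SageMaker and not the model used in the solution; in SageMaker you might instead train a built-in algorithm such as Random Cut Forest. The window size and threshold are assumptions.

```python
from statistics import mean, stdev


def zscore_anomalies(values: list[float], window: int = 5,
                     threshold: float = 3.0) -> list[int]:
    """Return indices whose value lies more than `threshold` standard deviations
    away from the mean of the preceding `window` values."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # A perfectly flat window has no spread to compare against, so skip it.
        if sigma > 0 and abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical motor current readings (amperes) with one spike.
readings = [5.0, 5.1, 4.9, 5.0, 5.2, 5.1, 9.8, 5.0]
print(zscore_anomalies(readings))  # [6]
```

A baseline like this is useful for sanity-checking a trained model: if a learned detector misses spikes that a z-score catches, the training data or features likely need attention.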

In the final part of our solution, we use AWS IoT TwinMaker to build digital twins of our commercial building and its equipment. For this, you create 3D models of the physical "things", upload them to TwinMaker and link them to the digital assets you created in the earlier steps, so that live asset data can be displayed on the 3D models. This is done by creating "Scenes" in TwinMaker. These scenes are visual representations of your physical building that your business users can use to analyze and improve the building's performance.

To conclude, AWS provides a wide range of services that can be combined to build a highly scalable, intelligent digitalization solution for a smart building. Such a solution helps you drive innovation that improves service to tenants, enhances the health and comfort of occupants and supports sustainability.